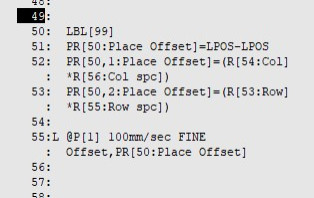

I like using row number × row spacing and column number × column spacing for the offset calculations. That way only one point has to be taught.
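As a rough sketch of that arithmetic (written in Python only to show the math, not as TP code): the function name `place_offset`, the `Offset` type, the spacing values, and the choice of columns along X and rows along Y are all assumptions for illustration. On the robot, the same multiplication would be done with registers such as `R[53:Row]` and `R[54:Col]` and applied as an offset from the one taught point.

```python
from typing import NamedTuple


class Offset(NamedTuple):
    """Offset from the single taught point, in the same units as the spacing."""
    x: float
    y: float


def place_offset(row: int, col: int, row_spacing: float, col_spacing: float) -> Offset:
    """Return the offset of grid cell (row, col) from the taught point at (0, 0).

    Assumption: columns step along X and rows step along Y. Swap the two
    terms if the cell is laid out the other way around.
    """
    return Offset(x=col * col_spacing, y=row * row_spacing)


# Example with made-up spacings: row 2, column 1.
print(place_offset(row=2, col=1, row_spacing=100.0, col_spacing=150.0))
```

With this approach, changing the pattern only means changing the row and column numbers, never re-teaching points.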

But I can't come up with a formula for your picking sequence, other than hard-coding it.

Assuming the yellow number is the "row number" and the pink number is the "column number":

1: LBL[1] ;

2: IF R[51:Place#]<>1,JMP LBL[2] ;

3: R[53:Row]=0 ;

4: R[54:Col]=0 ;

5: JMP LBL[99] ;

6: ;

7: LBL[2] ;

8: IF R[51:Place#]<>2,JMP LBL[3] ;

9: R[53:Row]=1 ;

10: R[54:Col]=0 ;

11: JMP LBL[99] ;

12: ;

13: LBL[3] ;

14: IF R[51:Place#]<>3,JMP LBL[4] ;

15: R[53:Row]=0 ;

16: R[54:Col]=1 ;

17: JMP LBL[99] ;

18: ;

19: LBL[4] ;

20: IF R[51:Place#]<>4,JMP LBL[5] ;

21: R[53:Row]=2 ;

22: R[54:Col]=0 ;

23: JMP LBL[99] ;

24: ;

25: LBL[5] ;

26: IF R[51:Place#]<>5,JMP LBL[6] ;

27: R[53:Row]=1 ;

28: R[54:Col]=1 ;

29: JMP LBL[99] ;

30: ;

31: LBL[6] ;

32: IF R[51:Place#]<>6,JMP LBL[7] ;

33: R[53:Row]=3 ;

34: R[54:Col]=0 ;

35: JMP LBL[99] ;

36: ;